Let’s say you have a 60Hz monitor, which means it can show 60 frames per second. Let’s say that you’re playing a graphics-intensive game, and your graphics card can only produce 50 frames per second. Because these do not match up perfectly, sometimes you’ll see part of one frame and part of another, creating an artifact known as screen tearing. This can even happen if you’re outputting 60 frames per second, if the graphics card sends a new image while the monitor is halfway through drawing the previous one.

In the past, the solution has been to enable the vertical sync, or Vsync, feature in your games. This syncs up the frames with your monitor so each frame is sent to the monitor at the correct time, eliminating screen tearing.

There’s just one problem: vsync will only work with framerates that divide evenly into your monitor’s refresh rate. So if your monitor is 60Hz, anything over 60 frames per second gets cut down to exactly 60 frames per second. That’s okay, since that’s all your monitor can display. But if you come to a particularly graphics-heavy part of a game, and your framerate dips below 60 (even to 59 frames per second), vsync will actually cut it down to 30 frames per second so you don’t induce tearing. And 30 frames per second is not exactly smooth.
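To make the framerate behavior of traditional vsync concrete, here is a minimal Python sketch. It assumes a simplified double-buffered model in which every frame is held until the next monitor refresh, so each frame occupies a whole number of refresh intervals. The function name `vsync_fps` and its parameters are made up for illustration and are not part of any driver or game API.

```python
import math


def vsync_fps(raw_fps: float, refresh_hz: int = 60) -> float:
    """Displayed framerate under a simplified double-buffered vsync model.

    raw_fps    -- framerate the graphics card could produce without vsync
    refresh_hz -- monitor refresh rate (assumed fixed)
    """
    if raw_fps <= 0:
        raise ValueError("raw_fps must be positive")
    # A finished frame waits for the next refresh, so it stays on screen
    # for a whole number of refresh intervals (at least one).
    intervals_per_frame = max(1, math.ceil(refresh_hz / raw_fps))
    return refresh_hz / intervals_per_frame


# Compare what the card renders with what a 60Hz monitor would show.
for fps in (100, 60, 59, 50, 29):
    print(fps, "->", vsync_fps(fps))
```

Under this model, 100 and 60 frames per second both display at 60, while 59 and 50 both fall to 30, which is the drop described in this article. Real games that use triple buffering or other techniques can behave differently.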


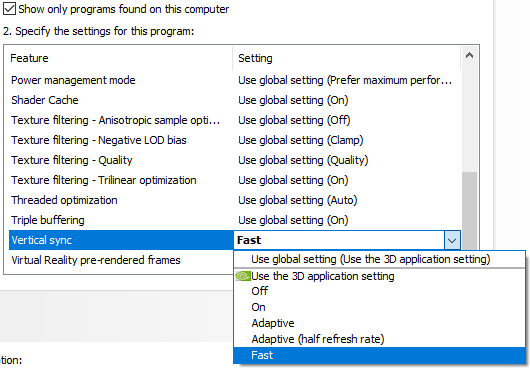

“Screen tearing” has traditionally been a problem when playing PC games.

There’s nothing about Adaptive V-Sync that requires support from the display. That option just dynamically switches V-Sync on and off based on load. It’s not like G-Sync, where the display itself has to support a variable refresh rate to match the frame rate of the game. I believe the reason that option and several others you’re used to aren’t available is that the Alienware M15 uses an NVIDIA Optimus setup for the built-in display, and possibly some of the built-in display output connectors. That means that the NVIDIA GPU is not physically wired to the display output. Instead, the Intel GPU is wired to the output, and when the NVIDIA GPU’s horsepower is required, it acts as a render-only device that passes completed video frames to the Intel GPU for output to the display.

The benefit to this design is primarily battery life, because it allows the NVIDIA GPU to be completely disabled when it’s not needed, whereas if it directly controlled the display output, it would have to be on whenever a display connected to that output was in use, even if nothing graphics-intensive was going on. But the downside to this design is that there are certain technologies that Intel GPUs don’t support passing through and/or that require the NVIDIA GPU to have direct control of the display output. Some such technologies include stereoscopic 3D, VR, G-Sync, and 5K resolution, and apparently maybe Adaptive V-Sync. That probably also explains the color and brightness controls in NVIDIA Control Panel (or lack thereof).

It’s possible that if you connect an external display, those options would become available, although that obviously doesn’t change the picture for the built-in display, and even with external displays you might find that it depends on the output you use. The M15 is rated for VR, so at least one output would have to be directly controlled by the NVIDIA GPU, but that doesn’t mean all of them are. Some Alienware systems only have the HDMI output wired to the NVIDIA GPU specifically for VR support, but even that has become a problem now that the new Oculus Rift S has switched to DisplayPort. The only way to get DisplayPort out of those systems is through their USB-C outputs, but those are wired to the Intel GPU. DisplayPort to HDMI adapters only convert a DisplayPort source to HDMI, not the other way around, unfortunately.

If you don’t see an option for Adaptive V-Sync in NVIDIA Control Panel, I doubt that enabling it in a game would have any effect. And if your only options are V-Sync on or off, then for most games I personally would probably run a 72 Hz refresh rate and turn it on, assuming you can set the built-in display to run at 72 Hz. Trying to maintain a locked 144 FPS to use V-Sync properly at the panel’s native refresh rate would be tough even for an RTX 2070, at least with modern games, unless you wanted to turn the detail way down.
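As a rough illustration of what "dynamically switches V-Sync on and off based on load" means, here is a small Python sketch. It is only a simplified model, not NVIDIA's actual driver logic: it assumes V-Sync stays on while the GPU can keep up with the refresh rate and is switched off as soon as it cannot. The function name `adaptive_vsync` and its parameters are hypothetical.

```python
from typing import Tuple


def adaptive_vsync(raw_fps: float, refresh_hz: int = 60) -> Tuple[float, bool]:
    """Return (displayed_fps, vsync_enabled) for a simplified Adaptive V-Sync model.

    raw_fps    -- framerate the GPU could produce without any sync
    refresh_hz -- refresh rate the display is currently running at
    """
    if raw_fps <= 0:
        raise ValueError("raw_fps must be positive")
    if raw_fps >= refresh_hz:
        # The GPU keeps up: V-Sync is on and frames are capped at the refresh rate.
        return float(refresh_hz), True
    # The GPU falls behind: V-Sync is off, so the framerate is not forced
    # down to a lower step, at the cost of possible tearing.
    return float(raw_fps), False


# A game that renders about 100 FPS, on a panel set to 144 Hz and to 72 Hz.
print(adaptive_vsync(100, 144))
print(adaptive_vsync(100, 72))
```

In this model the same game runs unsynced on the 144 Hz setting but stays capped and tear-free at 72 Hz, which is the reasoning behind running the display at 72 Hz when a locked 144 FPS is out of reach.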